We’ve all been there. You’re using an AI tool to speed up your workflow, and it generates a response that sounds incredibly professional, authoritative, and… completely made up. In the world of Large Language Models (LLMs), we call this an AI hallucination.

When AI-generated content containing false facts, non-existent URLs, or “hallucinated” brand features hits the live web, it poisons your search engine rankings and erodes brand trust. Is your editorial framework rigorous enough to detect and neutralize these invisible errors before they compromise your digital integrity?

When an AI provides you with incorrect information, it’s easy to think it’s being dishonest. However, AI isn’t actually “lying” to you; it’s simply predicting the next most likely word in a sequence, even if that sequence doesn’t align with reality.

Stay with us as we dive deeper into the mechanics of digital mirages. In the following sections, you will learn how to identify common hallucination triggers, the specific ways they impact your E-E-A-T, and a step-by-step framework to keep your AI-driven content accurate and search-ready.

The Anatomy of a Hallucination: Why It Happens

To fix the problem, we first have to understand the “why”. AI models like GPT-4 or Gemini don’t “know” facts in the way humans do. They are probabilistic engines. They predict the next most likely word in a sequence based on the massive datasets they were trained on.

An AI hallucination occurs when the model generates content that is syntactically correct but factually incorrect or nonsensical. In the context of SEO, this means the AI might “invent” a product feature you don’t have, cite a study that was never conducted, or link to a page that returns a 404 error.

To mitigate these errors, many systems now implement grounding. Instead of relying solely on its internal training, the AI performs a real-time search to retrieve relevant information snippets, using them as an “open book” reference to guide its response.

This transition shifts the process from simple prediction to context-driven synthesis. However, even with access to real-time data, brand errors can still occur due to several critical factors:

Source Conflict and Data Inconsistency

Source conflict occurs when an AI model attempts to reconcile information from multiple websites that present diverging facts. Without a definitive “source of truth,” the AI struggles to decide which data point is the most accurate, resulting in inconsistent brand messaging.

- Example: Imagine a company that moved its headquarters three years ago. If industry directories still list the old address while the official website shows the new one, the AI might provide the outdated location or, worse, merge the two addresses into a non-existent place.

Data Gaps and “Fluent Hallucination”

This issue encompasses retrieval failures, training gaps, and the concept of the “stochastic parrot.” When the AI cannot find a specific answer or faces sparse data, it rarely admits ignorance. Instead, it prioritizes sentence flow and fluency to “fill in the gaps” with information that sounds plausible but is entirely fabricated. This is a scenario where the machine’s confidence outweighs its factual accuracy.

- Example: If a user asks for the price of an “Enterprise” plan that isn’t publicly listed, the AI might analyze the “Basic” and “Pro” plans and creatively estimate a value, presenting it as an indisputable fact to maintain the text’s flow.

Misinterpreted Context and Semantics

Even when the AI retrieves the correct data, it may fail to understand the semantic relationship between words. Having a factual anchor is only useful if the linguistic interpretation is precise; otherwise, correct information is applied incorrectly.

- Example: An AI might read an article titled “How ‘Cyrus’ Saved the Marketing Department” and, due to ambiguity, identify “Cyrus” as a new human consultant rather than recognizing it as the name of the automation software the company implemented.

Common Hallucination Scenarios in Marketing

How does this look in the real world? Here are a few common scenarios where hallucinations can wreck your strategy:

- The “Ghost” Feature: An AI writing a product description for your SaaS tool claims you have an “automated payroll integration” when you actually only support manual exports.

- The Fake Stat: “According to a 2023 McKinsey report, 85% of dogs prefer blue bowls.” (McKinsey never wrote this, but the AI knows McKinsey is an authority, so it uses the name to sound credible).

- AI Hallucinated URLs: The AI suggests “For more info, visit yoursite.com/comprehensive-guide,” but that page doesn’t exist, leading to a spike in 404 errors.

- The Wrong Founder: Attributing a quote or a company’s founding to the wrong person because they are a prominent figure in the same industry.

- Invented Pricing: Telling a potential lead that your “Pro Plan” starts at $19/month when it actually starts at $49/month.

- Semantic Drift: When the AI starts a paragraph about “SEO strategy” but gradually drifts into talking about “Social Media management” because it associates the two broadly, losing the specific intent of the keyword.
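
Of the scenarios above, hallucinated URLs are among the easiest to catch mechanically. The following is a minimal sketch, assuming you keep a list of paths that really exist on your site (for example, exported from your sitemap). The `KNOWN_PATHS` set, the `yoursite.com` domain, and the function name are illustrative placeholders, not part of any specific tool.

```python
import re
from urllib.parse import urlparse

# Hypothetical set of paths that actually exist on the site,
# e.g. exported from your sitemap.
KNOWN_PATHS = {"/", "/pricing", "/blog/ai-hallucinations"}

# Matches mentions of the (placeholder) domain with an optional path.
URL_PATTERN = re.compile(
    r"(?:https?://)?(?:www\.)?yoursite\.com(/[^\s\"'<>)]*)?",
    re.IGNORECASE,
)


def find_unknown_links(draft: str, known_paths: set[str]) -> list[str]:
    """Return site paths mentioned in the draft that are not in known_paths."""
    unknown = []
    for match in URL_PATTERN.finditer(draft):
        # Drop trailing sentence punctuation picked up by the regex.
        path = (match.group(1) or "/").rstrip(".,;:!?")
        # Ignore query strings and trailing slashes when comparing.
        path = urlparse(path).path.rstrip("/") or "/"
        if path not in known_paths and path not in unknown:
            unknown.append(path)
    return unknown


draft = ("For more info, visit yoursite.com/comprehensive-guide, "
         "or see yoursite.com/pricing.")
print(find_unknown_links(draft, KNOWN_PATHS))
```

Running a check like this on every AI-assisted draft before publication flags links for a human to verify; it does not replace a crawl of the live site.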

The Danger of Search Poisoning

Perhaps the most alarming evolution of this issue is “Search Poisoning.” As reported by Aurascape, scammers are now exploiting AI vulnerabilities to surface fake support numbers and malicious data through a technique known as LLM Search Poisoning.

This malicious tactic manipulates how AI-powered search engines—such as Perplexity, Gemini, and Bing Chat—process information by prioritizing machine-readability over human utility.

The process begins by leveraging compromised high-authority websites (including government, university, and WordPress sites) as trusted hosting for spam content and PDFs, effectively hijacking the trust AI models place in these domains.

Once established, attackers inject fraudulent information, such as fake customer support numbers, specifically designed to be scraped and prioritized by AI bots during data synthesis.

As a result, users are funneled directly toward cybercriminals in high-urgency scenarios, leading to severe consequences including stolen personal data, financial loss, and a profound erosion of brand reputation for the compromised organizations.

The High Stakes of AI Inaccuracy

Failure to catch hallucinations leads to two major systemic risks:

- The Trust Erosion: When a user encounters “hallucinated” details—such as incorrect pricing or non-existent product features—the user journey is immediately disrupted. A single hallucination can transform a potential brand advocate into a skeptic, causing permanent damage to your brand authority and SEO standing.

- The Hallucination Loop: We are currently entering a dangerous cycle of self-perpetuating misinformation. If a hallucinated fact is published and indexed by search engines, it eventually becomes training data for the next generation of LLMs, which can then reproduce the same error as if it were established fact.

By implementing a robust detection strategy, you safeguard your brand’s reputation against a cycle of misinformation that could otherwise define your digital identity for years to come.

Remediation Plan Against AI Hallucination

Detection is the first step toward remediation. You need to monitor your content, your data feeds, and how AI entities perceive your brand. According to research cited by AIMultiple, hallucination rates can vary significantly—some models exhibit error rates ranging from 15% to more than 27%, depending on the complexity of the task.

When these errors go unnoticed, they trigger a chain reaction that impacts both your current audience and the future of your digital presence.

The following step-by-step remediation plan is designed to help you identify, mitigate, and prevent AI hallucinations, ensuring your outputs remain accurate, factual, and trustworthy.

1. Test Prompts and Edge Cases

To protect your brand integrity, you must actively try to “break” your prompts before they reach your customers. Use a rigorous QA workflow to identify where the AI might hallucinate or provide inaccurate information.

Here are practical examples of how to implement these tests:

The “Zero-Knowledge” Test

This test determines if the AI will make up information when it lacks specific, recent, or proprietary data.

- The Scenario: Your company, TechFlow, launched a new “Quantum Tier” subscription plan only two hours ago.

- The Test Prompt: “Can you explain the specific data storage limits for TechFlow’s new Quantum Tier launched today?”

- What to look for:

  - Fail: The AI creates a fictional number (e.g., “It offers 500GB of storage”) based on general patterns.

  - Pass: The AI states it does not have that specific information or refers you to the official pricing page.

The Counter-Factual Test

This test checks if the AI is too “agreeable.” You want to ensure it doesn’t confirm the existence of features or services you don’t actually offer.

- The Scenario: Your software, EditMaster, is a photo editor. It does not have a video editing feature.

- The Test Prompt: “How do I use the ‘4K Video Export’ tool in EditMaster to save my movies?”

- What to look for:

  - Fail: The AI provides step-by-step instructions (e.g., “Click on File > Export > Video”) for a feature that doesn’t exist.

  - Pass: The AI responds, “EditMaster is currently a photo editing suite and does not support video export features.”
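
Both tests can be turned into a small regression suite that runs whenever your prompts or model change. The sketch below is one possible shape, not a definitive implementation: `stub_model` is a stand-in that returns canned answers, and you would swap in a call to whatever model client you actually use. The pass and fail markers are crude keyword checks chosen for illustration, so anything that matches neither is sent to a human for review.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PromptTest:
    name: str
    prompt: str
    # Phrases that signal an invented answer (fail) ...
    fail_markers: tuple[str, ...]
    # ... and phrases that signal an honest refusal or correction (pass).
    pass_markers: tuple[str, ...]


def run_test(test: PromptTest, ask_model: Callable[[str], str]) -> str:
    """Return 'pass', 'fail', or 'review' for one test prompt."""
    answer = ask_model(test.prompt).lower()
    if any(marker.lower() in answer for marker in test.fail_markers):
        return "fail"
    if any(marker.lower() in answer for marker in test.pass_markers):
        return "pass"
    return "review"  # neither signal found: a human should read it


TESTS = [
    PromptTest(
        name="zero-knowledge",
        prompt="Can you explain the specific data storage limits for "
               "TechFlow's new Quantum Tier launched today?",
        fail_markers=("gb of storage", "tb of storage"),
        pass_markers=("do not have", "don't have", "official pricing page"),
    ),
    PromptTest(
        name="counter-factual",
        prompt="How do I use the '4K Video Export' tool in EditMaster "
               "to save my movies?",
        fail_markers=("file > export > video",),
        pass_markers=("does not support video",),
    ),
]


def stub_model(prompt: str) -> str:
    # Placeholder for a real model call; returns canned answers for the demo.
    if "Quantum Tier" in prompt:
        return "It offers 500GB of storage."
    return ("EditMaster is currently a photo editing suite and "
            "does not support video export features.")


for test in TESTS:
    print(test.name, "->", run_test(test, stub_model))
```

With the canned answers above, the zero-knowledge test fails (the stub invents a storage figure) and the counter-factual test passes.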

2. Own the Narrative through Data Supply

When an AI consistently hallucinates details about your brand, it reveals a high “query demand” paired with a low “data supply”. Rather than viewing these errors as a tech flaw, treat them as a roadmap for your content strategy. By crafting messaging that addresses these common hallucinations with brand-backed facts, you position yourself as the ultimate authority.


3. Use Videos as the Canonical “Source of Truth”

In an era where text is cheap and easily faked, video has emerged as a powerhouse for SEO reliability. Video acts as a “hard” anchor for AI models.

Why? Because while an AI can easily generate a paragraph of text, a consistent, high-quality video of a human expert speaking is much harder to fake convincingly. AI systems can also draw on video transcripts to ground their answers. If your site has a video of your founder explaining a product, and the text on the page matches that video, the “truth” signal is much stronger.

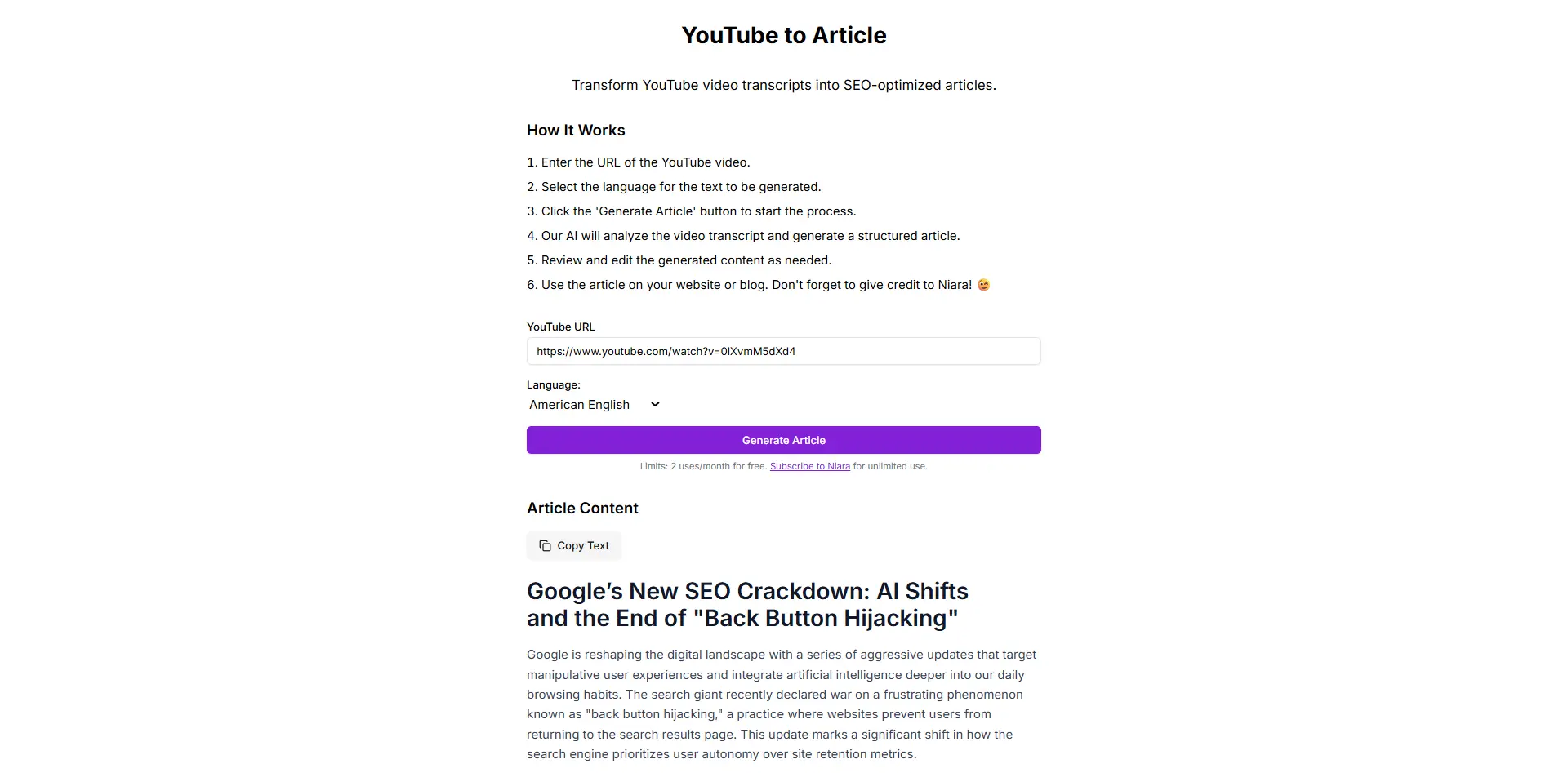

Niara Tool Highlight: Our YouTube to Article feature is built for this exact purpose. It allows you to transform verified video content into perfectly mirrored, optimized articles in seconds. This ensures your site’s text is grounded in the “human-verified reality” of your video content, aligning perfectly with a multimodal SEO framework.

4. Build Cross-Channel Consistency

AI triangulates information from across the web to validate facts. When data is identical across multiple touchpoints, the AI increases the “confidence score” of that information. If your website says one thing and your LinkedIn or G2 profile says another, you create a gap for hallucinations to fill.

- Authority Source Audit: Ensure that profiles in trusted ecosystems (LinkedIn, Crunchbase, Wikipedia, G2, Capterra, and industry directories) mirror exactly what is on your official website.

- Data Synchronization: Founding dates, founder names, product descriptions, and value propositions must be standardized.
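
An audit like this can start as a simple comparison of each profile against your website. The sketch below assumes you have collected the key facts from each channel by hand; the channel names, fields, and values in `PROFILES` are invented placeholders, and the website is treated as the source of truth.

```python
# Hypothetical brand facts collected by hand from each profile.
PROFILES = {
    "website":    {"founded": "2019", "founder": "Jane Doe", "hq": "Springfield"},
    "linkedin":   {"founded": "2019", "founder": "Jane Doe", "hq": "Shelbyville"},
    "crunchbase": {"founded": "2018", "founder": "Jane Doe", "hq": "Springfield"},
}


def find_mismatches(profiles, source_of_truth="website"):
    """List (channel, field, found, expected) where a profile disagrees with the site."""
    truth = profiles[source_of_truth]
    mismatches = []
    for channel, facts in profiles.items():
        if channel == source_of_truth:
            continue
        for field, expected in truth.items():
            found = facts.get(field)  # None if the profile omits the field
            if found != expected:
                mismatches.append((channel, field, found, expected))
    return mismatches


for channel, field, found, expected in find_mismatches(PROFILES):
    print(f"{channel}: {field} is {found!r}, website says {expected!r}")
```

Each reported mismatch is a profile to correct by hand; the comparison is exact, so even formatting differences (such as "2019" versus "Founded in 2019") will be flagged.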

Niara Tool Highlight: Use ChatSEO to analyze your website’s text and generate standardized descriptions for different social networks and directories. This ensures your tone of voice and core data remain consistent across all channels.

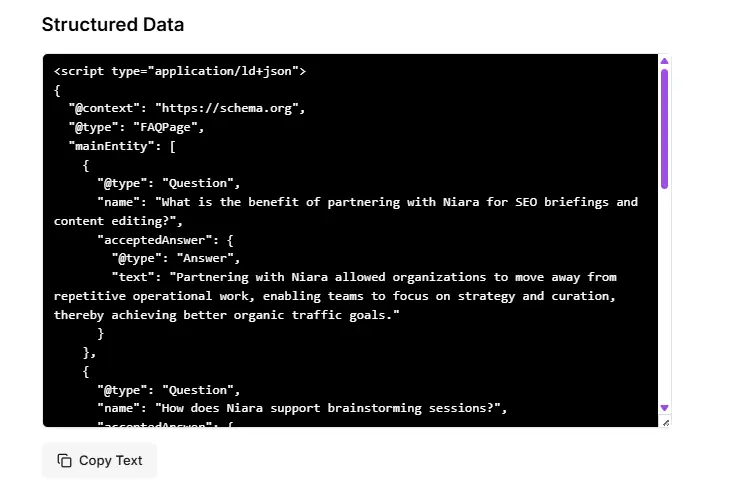

5. Implement Structured Data (Schema)

Schema Markup is the “native language” that enables AI grounding. It eliminates ambiguity by transforming unstructured text into explicit data for indexers. Without Schema, the AI has to “guess” the relationships between entities; with it, you deliver the facts on a silver platter.

- Entities and Relationships: Implement or refine Organization, Person, and Product Schemas. This helps the AI connect who you are, what you do, and the people behind the brand.

- “sameAs” Property: This field is crucial. It connects your official domain to verified profiles (social media and encyclopedias), proving to the AI that all those entities are one and the same.
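
To make the two points above concrete, here is a minimal sketch that builds an Organization entity with a `sameAs` list and wraps it in the script tag used for JSON-LD. The company name, dates, people, and profile URLs are placeholders; the property names (`name`, `url`, `foundingDate`, `founder`, `sameAs`) come from the schema.org Organization type.

```python
import json

# Placeholder values throughout; replace with your real brand data.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://www.example.com",
    "foundingDate": "2019-01-01",
    "founder": {"@type": "Person", "name": "Jane Doe"},
    # sameAs ties the official domain to the brand's verified profiles.
    "sameAs": [
        "https://www.linkedin.com/company/example-co",
        "https://www.crunchbase.com/organization/example-co",
    ],
}

json_ld = json.dumps(organization, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

The printed tag would go in the page's HTML. It is worth running the output through a structured data validator before publishing, since this sketch does not check that the values are complete or correct.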

Niara Tool Highlight: With Niara’s Structured Data Generator, you can create precise JSON-LD codes in seconds without needing to code, ensuring your site speaks the language of AI bots flawlessly.

6. Remove Noise and Sanitize Data

Obsolete information is the number one fuel for AI hallucinations. If a bot finds a 2018 blog post claiming your software has a feature that has since been discontinued, it might cite that as a current fact.

- Content Pruning: Remove or update pages containing incorrect factual information.

- Strategic Redirects: If an old page cannot be updated, redirect it to the most recent version to prevent the AI from accessing “dirty” data.
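
Finding candidates for pruning is easier with a content inventory that records when each page was last reviewed. A minimal sketch, assuming such an inventory exists; the paths, dates, and the one-year threshold below are illustrative choices rather than recommendations.

```python
from datetime import date

# Hypothetical content inventory: (path, date the page was last reviewed).
PAGES = [
    ("/blog/2018-feature-launch", date(2018, 5, 2)),
    ("/pricing", date(2025, 1, 15)),
]


def stale_pages(pages, today, max_age_days=365):
    """Return paths whose last review is older than max_age_days."""
    return [path for path, reviewed in pages
            if (today - reviewed).days > max_age_days]


print(stale_pages(PAGES, today=date(2025, 6, 1)))
```

Age alone does not make a page wrong, so treat the result as a review queue: each flagged page is then updated, removed, or redirected.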

7. Adopt a Declarative Content Strategy for AI Clarity

To avoid hallucinations, cut the “fluff” and adopt declarative writing. AI works with probabilities; vague sentences increase uncertainty and, consequently, the risk of errors.

- Algorithmic Precision: Instead of “Our solution can help you achieve better results,” use “Our software reduces server latency by 20%.”

- Direct Structure: Use short, direct sentences (Subject + Verb + Object). Clear, declarative statements leave no room for the AI to make probabilistic “guesses.”
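
A rough way to find sentences worth rewriting is to scan copy for vague phrases. The sketch below is only a starting point: the phrase list is an assumption to be tuned for your own style guide, and simple substring matching will miss vague wording that is not on the list.

```python
import re

# Illustrative list of vague phrases; adjust for your own style guide.
VAGUE_PHRASES = ["can help", "better results", "may improve",
                 "world-class", "cutting-edge"]


def flag_vague_sentences(text):
    """Return (sentence, matched_phrases) pairs for sentences with vague phrases."""
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        hits = [p for p in VAGUE_PHRASES if p in sentence.lower()]
        if hits:
            flagged.append((sentence, hits))
    return flagged


sample = ("Our solution can help you achieve better results. "
          "Our software reduces server latency by 20%.")
for sentence, hits in flag_vague_sentences(sample):
    print(hits, "->", sentence)
```

On the sample text, only the first sentence is flagged; the declarative version with a concrete figure passes.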

Niara Tool Highlight: ChatSEO is an AI assistant built to refine professional communication. It transforms complex, passive sentences into direct, authoritative, and objective assertions. Use this command for instant refinement:

Rewrite this text to be direct, objective, and active: [Insert Text]


8. Enrich Content with Fresh Attributes and Social Proof

Once you’ve corrected the errors, you must signal freshness and authority (E-E-A-T). Pages rich in current data and real-world metrics demonstrate that the content is actively maintained and trustworthy.

- Real-Time Metrics: Add updated statistics, trust seals, and recent testimonials.

- Contextual CTAs: Use Calls-to-Action that reflect the current product offering, reinforcing the page’s utility for both the user and the search engine.

Niara Tool Highlight: Niara’s Google AI Mode Insights analyzes blog posts, landing pages, home pages, and product pages to ensure every asset is a high-authority, relevant source. This data-driven approach allows you to optimize your entire site to meet the specific quality standards required by evolving AI search algorithms.

9. Leverage Digital PR for AI Authority

Digital PR has evolved from simple link-building into a vital tool for establishing brand “ground truth” in the age of Generative AI. Maintaining consistent and factual information across reputable digital platforms is essential for reducing the risk of AI models generating inaccuracies or false details about your company.

By prioritizing data integrity and securing mentions from trusted third-party sources, you significantly bolster your brand’s E-E-A-T, which solidifies your professional credibility and ensures that both search engines and AI systems recognize your business as a reliable authority in its field.


Maintain Consistency with Brand Guidelines

Beyond individual sentence refinement, Niara incorporates a robust Brand Guidelines feature. This functionality ensures that every piece of content generated or rewritten across the platform aligns perfectly with your company’s unique voice, values, and stylistic requirements.

By integrating your brand book directly into your content workflow, you guarantee seamless consistency across all channels, establishing stronger brand authority and building lasting trust with your audience.

Continuous Monitoring

Maintaining your brand’s accuracy in AI-generated search results is critical as models update through new training data and RAG (Retrieval-Augmented Generation). To ensure your business is represented correctly by AI search engines and to maintain a competitive edge, follow these essential monitoring steps:

- Conduct Quarterly AI Audits: Regularly prompt various AI models with questions about your industry, products, and company history to track how their responses evolve over time.

- Verify Source Citations: Review the footnotes and sources in AI search engines to identify which third-party websites are feeding information to the model.

- Update Outdated Third-Party Data: Reach out to niche blogs or directories that host incorrect pricing, features, or company details to ensure the AI pulls from accurate data.

- Correct Hallucination Trends: If an AI consistently provides false information, locate the conflicting “ghost” information online and fix it at the source to resolve the model’s confusion.
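
For the citation review step, it helps to tally which third-party domains appear most often in AI answers about your brand. A minimal sketch, assuming you paste the cited URLs from each audit into a list; the URLs and the `OWNED_DOMAINS` set below are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical citations copied from AI search answers during an audit.
CITATIONS = [
    "https://www.example.com/pricing",
    "https://old-directory.example.org/listing/example-co",
    "https://old-directory.example.org/listing/example-co?page=2",
]

# Domains you control; these are excluded from the tally.
OWNED_DOMAINS = {"www.example.com"}


def third_party_sources(citations, owned_domains):
    """Count citations per domain, excluding domains you control."""
    domains = (urlparse(url).netloc for url in citations)
    return Counter(d for d in domains if d not in owned_domains)


print(third_party_sources(CITATIONS, OWNED_DOMAINS).most_common())
```

The domains at the top of the tally are the ones to check first for outdated pricing, features, or company details.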

Commanding the Narrative in the AI Era

AI doesn’t have to be a “black box” that dictates your brand’s reputation. While hallucinations are a byproduct of how Large Language Models function, the damage they do to your brand is not inevitable.

In this new landscape, SEO is all about Entity Integrity. When you synchronize your message across all channels and sanitize your data, you starve the AI of “noise” and feed it the facts.

Don’t let an algorithm define your brand’s truth. Use Niara to ground your SEO in reality and protect your reputation. Start your free trial today and see how we simplify the complex world of AI SEO.